Why this matters

AI adoption in sales has moved beyond experimentation.

Research from McKinsey shows that nearly 90 percent of organisations now use AI in at least one business function, with sales and marketing among the most common areas of deployment. At the same time, industry data shows that many sales professionals now rely on AI daily to assist with research, outreach, forecasting, and proposal preparation.

AI is no longer an isolated productivity tool. It increasingly influences the commitments organisations make to their clients.

When AI shapes pricing logic, forecasts, or contractual language, the question shifts from efficiency to responsibility. This is why AI sales governance is so critical.

The same tension is visible in AI sales pricing, where faster recommendations only create value if accountability and value protection remain explicit.

Where the real shift is happening

AI is now embedded in everyday commercial activity:

- lead qualification

- opportunity scoring

- RFx drafting

- CRM enrichment

- forecast analysis

These uses accelerate execution and expand analytical capacity. But they also introduce a structural challenge.

If AI contributes to decisions that ultimately become commercial commitments, leadership must ensure those commitments remain accountable, explainable, and defensible.

Speed without governance creates exposure.

This view is consistent with the NIST AI Risk Management Framework, which treats governance as a cross-cutting function and stresses human oversight and accountability in AI-enabled decisions.

Data maturity precedes AI maturity

AI does not fix weak foundations. It amplifies them.

Organisations generating measurable value from AI consistently show stronger data governance, clearer KPIs, and explicit ownership structures. When underlying systems are inconsistent, AI accelerates the spread of errors rather than correcting them.

In practical terms:

If CRM data is unreliable, AI-driven segmentation becomes misleading. If pricing logic is inconsistent, AI-generated proposals replicate that confusion. If ownership is unclear, AI-driven decisions become difficult to defend.

This is also why AI sales segmentation cannot be treated as a pure data exercise: weak foundations distort prioritisation from the start.

AI magnifies the quality of existing systems. It does not compensate for their weaknesses.

Why AI sales governance requires clear accountability

Human ownership must remain explicit

AI can support analysis and accelerate preparation, but commercial commitments must always have a clearly identified human owner.

Every AI-influenced output that affects a client commitment should include:

- a named responsible owner

- a validation step

- traceable assumptions
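As an illustration only, these three requirements can be expressed as a simple gate in a workflow tool. The sketch below assumes a hypothetical `AIOutput` record and a hypothetical `missing_controls` check. Neither comes from any specific CRM or sales platform. An output is treated as ready for a client commitment only when nothing is missing.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class AIOutput:
    """Hypothetical record for an AI-influenced output that affects a client commitment."""
    description: str
    owner: Optional[str] = None          # named responsible human owner
    validated_by: Optional[str] = None   # who performed the validation step
    assumptions: List[str] = field(default_factory=list)  # traceable assumptions


def missing_controls(output: AIOutput) -> List[str]:
    """Return the governance controls still missing; an empty list means ready."""
    missing = []
    if not output.owner:
        missing.append("named owner")
    if not output.validated_by:
        missing.append("validation step")
    if not output.assumptions:
        missing.append("traceable assumptions")
    return missing


if __name__ == "__main__":
    draft = AIOutput(description="AI-drafted pricing clause", owner="Account lead")
    print(missing_controls(draft))  # ['validation step', 'traceable assumptions']
```

The check stays deliberately simple. In practice, the same three fields would sit on whatever object already carries the proposal or clause.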

This is not bureaucracy. It is commercial hygiene.

Governance must precede scale

Organisations that generate sustained value from AI define governance structures early.

Before scaling AI across sales workflows, leadership should define:

- approved use cases

- data boundaries

- validation thresholds

- escalation mechanisms
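One way to make these four definitions concrete is to write them down as an explicit policy that workflows check before acting. The sketch below is hypothetical. The use-case names, the data fields, the discount threshold and the "deal desk" escalation contact are illustrative placeholders, not recommended values. It shows how a request is rejected, escalated or allowed to proceed under such a policy.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable


@dataclass(frozen=True)
class GovernancePolicy:
    approved_use_cases: FrozenSet[str]   # approved use cases
    allowed_data_fields: FrozenSet[str]  # data boundaries: fields the AI may use
    max_auto_discount_pct: float         # validation threshold before human review
    escalation_contact: str              # escalation mechanism


def route_request(policy: GovernancePolicy, use_case: str,
                  data_fields: Iterable[str], discount_pct: float) -> str:
    """Decide whether an AI-assisted request is rejected, escalated, or may proceed."""
    if use_case not in policy.approved_use_cases:
        return "reject: use case not approved"
    outside = set(data_fields) - policy.allowed_data_fields
    if outside:
        return "reject: data outside boundary: " + ", ".join(sorted(outside))
    if discount_pct > policy.max_auto_discount_pct:
        return "escalate to " + policy.escalation_contact
    return "proceed with standard validation"


if __name__ == "__main__":
    policy = GovernancePolicy(
        approved_use_cases=frozenset({"rfx_drafting", "forecast_analysis"}),
        allowed_data_fields=frozenset({"account_name", "deal_stage", "list_price"}),
        max_auto_discount_pct=10.0,
        escalation_contact="deal desk",
    )
    print(route_request(policy, "rfx_drafting", ["account_name"], 15.0))
    # escalate to deal desk
```

Even requests that pass every check still go through the normal validation step. The policy narrows what AI may do. It does not replace the human owner.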

Experimentation without governance creates hidden liabilities.

Contracts must reflect delivery reality

AI increasingly contributes to service design, forecasting, and proposal preparation. Contracts must remain grounded in operational reality rather than automated convenience.

Risk accumulates in the gap between how services are actually delivered and how commitments are written.

AI does not change the fundamentals of strong commercial agreements. It makes discipline more important.

From the field

In one global sales organisation, AI significantly reduced RFx drafting time. Productivity improved quickly.

However, legal reviews later revealed inconsistencies in contractual clauses generated through automated workflows.

The issue was not the tool itself. It was the absence of validation gates. The same issue appears in AI RFx responses, where speed is useful only if security, validation, and ownership remain controlled.

After introducing structured prompt libraries, defined legal checkpoints, and explicit ownership of AI-generated outputs, cycle times remained faster while contractual risk decreased.

Speed was preserved. Exposure was reduced.

What to remember

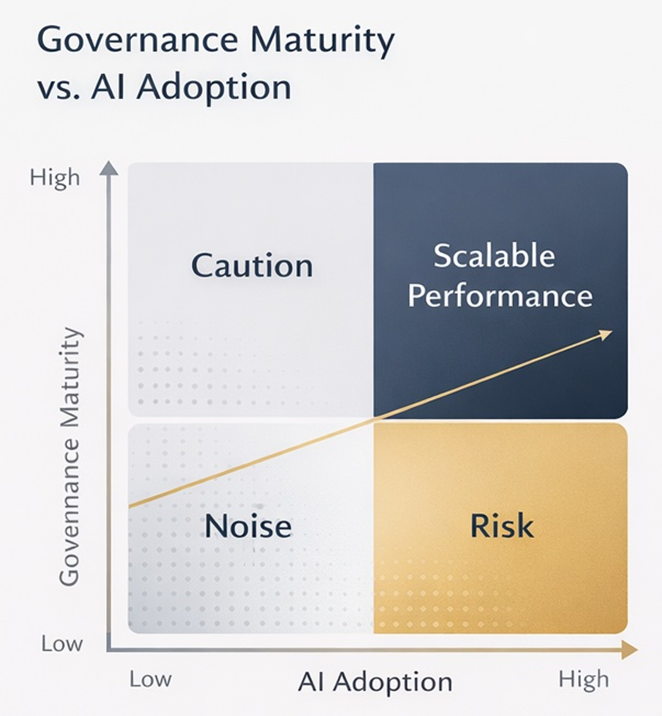

AI increases speed.

Governance determines whether that speed becomes scalable advantage or uncontrolled risk.

AI does not create performance on its own. It creates leverage. The quality of leadership, data, and accountability determines the outcome.